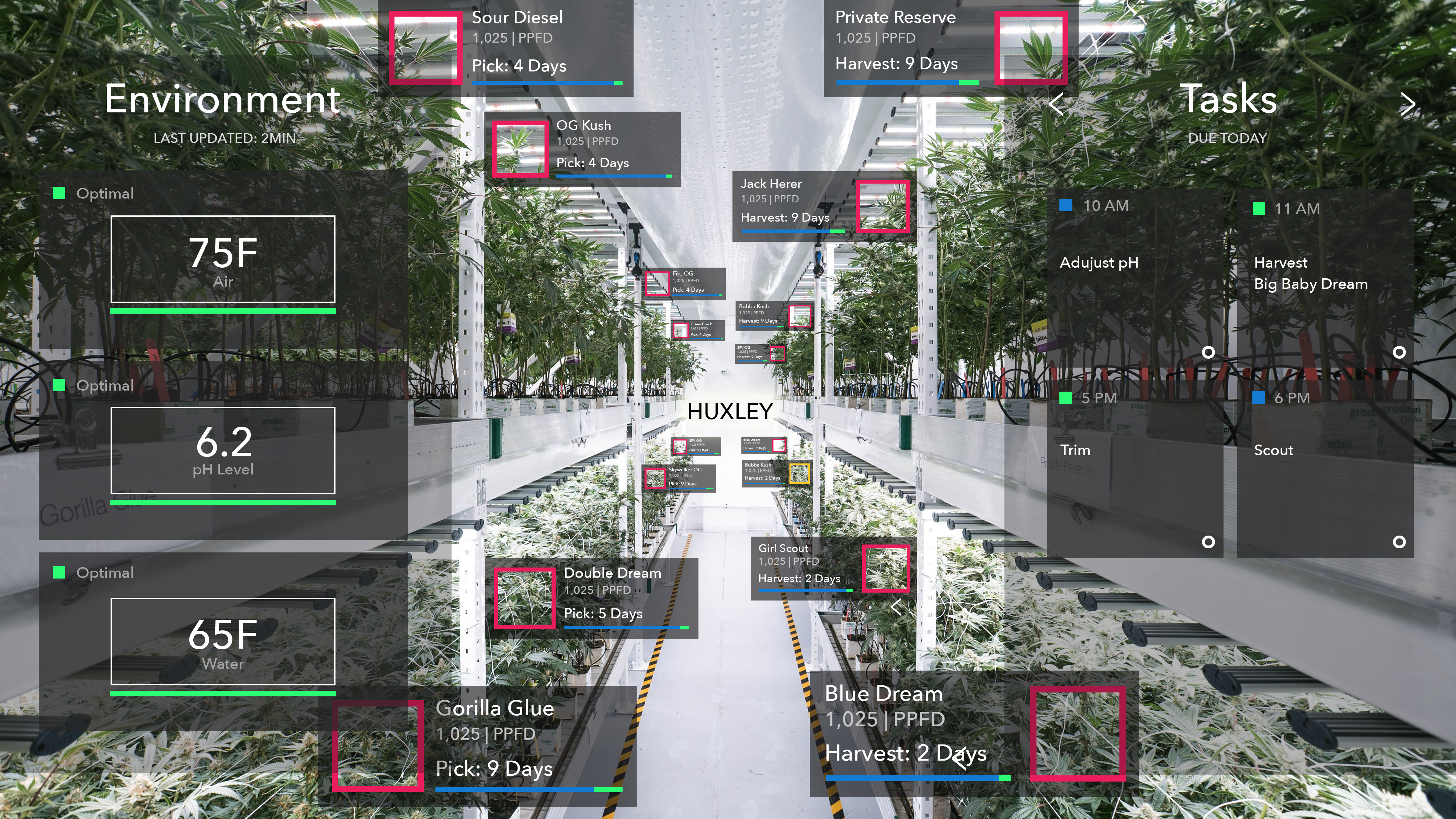

The first artificial intelligence (AI)-enabled augmented reality crop management system may be coming to an indoor farm near you very soon. Huxley combines machine learning, computer vision and an augmented reality interface to let essentially anyone be a master farmer.

With the help of a wearable technology like Google Glass, the user is presented with information about the plants in any indoor farm. Huxley’s AI aims to detect and diagnose visual anomalies and then suggest an action to mitigate the issue while correlating it with environmental data to determine the cause. And since AI keeps getting smarter with every harvest, founder Ryan Hooks says it won’t be long until the world’s greatest expert on hydroponic growing isn’t a human.

Hooks has had a varied career working in the media space for large tech companies like Google and Vevo, as well as working on food issues with Food Inc and the G8 Summit. In 2014, he founded Isabel, a smart grow system for the growth and transportation of produce indoors, and debuted Huxley’s Plant Vision platform in 2016.

We got into the weeds with Hooks about how Huxley will work, how much it will cost, and how quickly it could get smarter than today’s master farmers.

What is the status of the product right now?

I’ve been developing this for the last two years. We have 22 pilots ready, from cannabis to big greenhouses and vertical farms. People already want the system; we just have to connect the capital to get our team in place, so we can conquer these pilots.

A lot of these data-based solutions for ag need a certain amount of scale to be effective. What kind of scale does your product require?

We install infrared and RGB cameras in a facility. That can be monitoring 1,000 square feet of vegetables or a couple of cannabis plants, or it could monitor a $15,000 orchid. If you want, think of it as artificial intelligence (AI) plant insurance. On the plant level, the economics of it aside, say you’re growing this orchid and you have this AI plant insurance from Huxley: we’re taking a photo every minute, scanning it for diseases or any anomalies, and pulling in environmental data from the sensors. It can be literally one plant. So if I have a couple of plants or a hundred or a thousand or ten thousand, every time that crop grows and you find the best flavor and the best yield, you can visually and environmentally know what the conditions were that made that.

What will the pricing structure be?

Depending on which crop and the specification of the greenhouse, we’re going to charge per square foot for the AI, and then for the augmented reality there will be a monthly service fee that will tie in with our main system. The AI itself is going to self-optimize over time, so the more greenhouses and types of crops we’re growing, the more data we’ll be able to share.

Where are you sourcing the initial recipes that will give the AI a base of plant knowledge to build upon?

We’re utilizing data sets from academic institutions that train our AI to know what to look for. One of the interesting things with Huxley is that we’ve been developing a backend for what’s called ‘supervised learning.’ So master growers and academics around the world can train the AI on what the optimal scenario looks like, and on what diseases and anomalies look like, so as we train the system, it will get smarter.
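As an editorial illustration of what supervised learning means here, the sketch below trains a very simple classifier from expert-labeled examples. It is not Huxley's code: the nearest-centroid method, the mean-RGB features, the label names, and the sample values are all assumptions chosen to keep the example small.

```python
from math import dist
from statistics import mean

def train_centroids(examples):
    """Average the feature vectors of each label (hypothetical mean-RGB features)."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }

def classify(centroids, features):
    """Return the label whose centroid is closest to the new feature vector."""
    return min(centroids, key=lambda label: dist(centroids[label], features))

# Made-up examples standing in for images labeled by a grower or academic.
labeled = [
    ((60, 140, 50), "healthy"),
    ((70, 150, 60), "healthy"),
    ((180, 190, 70), "yellowing"),
    ((170, 180, 60), "yellowing"),
]

centroids = train_centroids(labeled)
print(classify(centroids, (175, 185, 66)))
```

The point of the sketch is only that labels supplied by people define what the system treats as normal or abnormal; a production vision system would learn from full images rather than three color averages.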

This is all done through visual learning, correct?

What we’re doing is visual, but our database is tracking from the seed to shipping and shows the air, light, and water conditions, and then we correlate that with our vision.
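One way to picture that correlation step is to attach the most recent sensor reading to each photo by timestamp. The following is a minimal sketch under assumed data shapes; the file names, field names (`air_temp_c`, `ph`), and values are hypothetical and do not describe Huxley's database.

```python
from bisect import bisect_right

def latest_reading_before(readings, timestamp):
    """Return the newest sensor reading taken at or before `timestamp`.

    `readings` is a list of (time_in_seconds, values) sorted by time.
    Returns None if no reading exists yet at that time.
    """
    times = [t for t, _ in readings]
    index = bisect_right(times, timestamp)
    return readings[index - 1][1] if index else None

def annotate(images, readings):
    """Pair each (image_name, time) with the environment at that moment."""
    return [
        {"image": name, "time": t, "environment": latest_reading_before(readings, t)}
        for name, t in images
    ]

# Hypothetical sensor log and photo log.
readings = [
    (0, {"air_temp_c": 22.0, "ph": 6.0}),
    (300, {"air_temp_c": 24.5, "ph": 6.1}),
]
images = [("tray1_0001.jpg", 60), ("tray1_0002.jpg", 360)]

rows = annotate(images, readings)
for row in rows:
    print(row)
```

With records joined this way, a visual anomaly flagged in a photo can be looked up alongside the air and water conditions recorded just before it.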

How would it perceive something like taste or flavor anomalies?

If the crop is not in ideal condition, it will know that. If the lettuce is becoming more yellow or light green, or is off the optimal visual path, eventually you’ll just know how to self-correct that. So if it’s a nutrient deficiency or an environmental scenario, it will know. You tell Huxley that this was bad lettuce, and then Huxley knows that all the images in that whole data set become a good reference for what not to grow.

Doesn’t that leave the door open for a false correlation?

As it is supervised by academics and master growers, these correlations will start to make more sense. The autopilot of the greenhouse is controlling the air, light and water conditions. If the nutrient pH is fine and the environment is at optimal settings, you’re not going to run into that. But if it did happen, we would be able to correlate why it happened and it would get better over time.

Does Huxley have the potential to get smarter than the smartest farmer out there?

With any computer vision system or machine learning system, the data that goes into it is very important. It needs to be supervised. It needs to be catalogued in an appropriate way. As you train it or [for example] as more self-driving cars go down the road, they’re creating a better map of the world around them. What Huxley is doing is tracking the information, as the grower is growing, through machine learning and computer vision. Then eventually the confidence level of that goes up and it becomes better than the best grower. The end goal is to create an AI that can take the stress off of all these variables and simplify the process for the farmer.

Can you estimate a timeline for that?

I would say one-to-two years per plant species. It will never be 100%, but for scouting in a greenhouse, the best human might be at an 80% confidence level, because even the best human going through the facility is going to miss what might be going on and can’t do it 24 hours a day. Once you pass that 80% threshold, then it’s doing a better job than the best person you could hire.

It sounds like focusing on one plant species would be the fastest way to see what it can really do. Is there a reason that your pilot program is so varied?

The system that we’re making is called Plant Vision, so whatever growers are working with, they’ll be able to start training their own data sets. We’re starting with cannabis because it’s the most economically viable, but if it’s an orchid or a high-margin, high-value item, those are gonna be the best plants to start with. And also for R&D, the cycles are going to be more important. You’re going to learn a lot quicker on 30-day cycles than you are on 90.

Is there really a $15,000 orchid out there somewhere?

Yes, if you look up high-priced flowers there’s an orchid that sold for £160,000 ($207k). So we could do AI orchid insurance. Saffron is $900 per pound! The second a bug comes in and tries to damage your saffron crop, we’ll zap it with a laser.

Image: MedMen cannabis cultivation facility featuring LED lights from Fluence Bioengineering with AR. Credit: Huxley